📍 Part 4 of 8 · Becoming Agent-Native

An 8-part series on going from delivery team to agent-native organization – lessons earned, not borrowed.

Genesis · Anxiety · Names Matter · → Proof of Value · The Pivot · Co-Creation · The Garage · The Flywheel

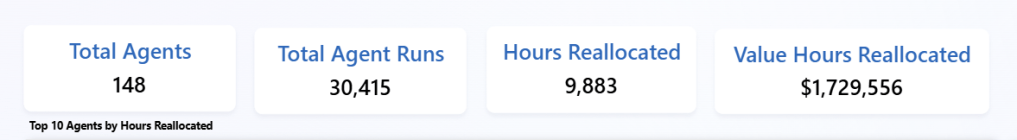

Early in Phase 2, before we knew whether people would even use these agents, we built a usage dashboard and a small component that every agent had to include, whether it ran on Power Automate, Copilot Studio, or M365 Copilot. Including it was table stakes for onboarding.

It felt a bit like overhead at the time.

It became the foundation of everything.

What the dashboard tracked: which agents were being used, how often, by whom, and with what outcome.

Simple. But surprisingly revealing.
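To make those four dimensions concrete, here is a minimal Python sketch of what a usage record and a per-agent roll-up could look like. It is an illustration under assumptions, not the actual component: the field names, the `summarize` helper, the outcome labels, and the sample users and dates are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageEvent:
    """One agent invocation. All field names are hypothetical."""
    agent: str    # which agent was used
    user: str     # by whom
    day: date     # when it ran ("how often" comes from counting events)
    outcome: str  # with what outcome; "success"/"failure" are assumed labels


def summarize(events):
    """Roll events up into runs, distinct users, and success rate per agent."""
    runs = defaultdict(int)
    users = defaultdict(set)
    successes = defaultdict(int)
    for e in events:
        runs[e.agent] += 1
        users[e.agent].add(e.user)
        if e.outcome == "success":
            successes[e.agent] += 1
    return {
        agent: {
            "runs": runs[agent],
            "distinct_users": len(users[agent]),
            "success_rate": successes[agent] / runs[agent],
        }
        for agent in runs
    }


# Made-up sample data, for illustration only.
events = [
    UsageEvent("Theo", "alice", date(2025, 1, 6), "success"),
    UsageEvent("Theo", "bob", date(2025, 1, 6), "success"),
    UsageEvent("Theo", "alice", date(2025, 1, 7), "failure"),
    UsageEvent("George", "alice", date(2025, 1, 10), "success"),
]
summary = summarize(events)
print(summary["Theo"])
```

Keeping one flat record per invocation means every question the dashboard answers (which agent, how often, by whom, with what outcome) is just a different grouping of the same events.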

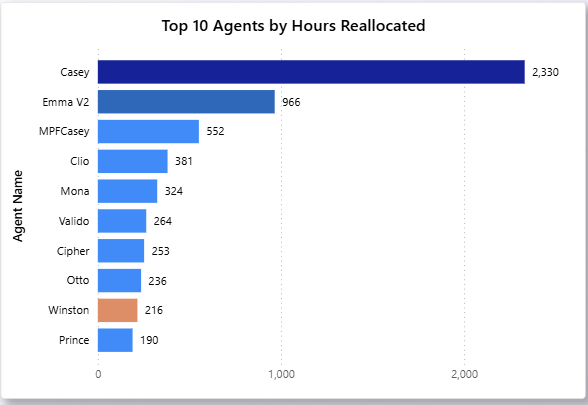

Some agents were hits. Usage climbed. Feedback was positive. The team became genuinely dependent on them. These got investment: more features, deeper integration, wider rollout.

Some were mediocre. Usage below expectation but not zero. The dashboard made us ask the right question: is the agent underperforming, or is there an onboarding gap? Is there a better design? Those are different problems. You can’t diagnose without the data.

Some just didn’t work out. And the dashboard gave us permission to retire them. No politics, no ego, just “the numbers say this isn’t earning its place.”

The data gave us insight into people, not just agents.

We saw a clear split emerge: pro users and skeptics.

Some team members were all in. They used agents daily, sent feedback constantly, and acted like internal product managers for the agents they’d adopted. Others were lukewarm.

That visibility mattered. It let us find the right internal champions. It let us understand the gap between those two groups. It let us have a business conversation, with real numbers, about what was working.

ROI reporting doesn’t only justify the investment. It shows your team you’re taking this seriously. And them.

But the most important proof never showed up in the dashboard.

It was the moments.

The team member who realized they hadn’t manually changed that case status in weeks. Not because they forgot, but because Theo handled it.

The person who got their Friday afternoon back because George was doing the weekly summary.

The quiet relief of: oh, that’s just handled now.

When those moments accumulate, something shifts. The agent stops being an experiment and starts being infrastructure. The dashboard tracks the what. The moments explain why it matters.

If I were advising someone starting this today, I’d say: Build the measurement layer early, at the business group level, not deep in IT.

Once you have 10 agents and a skeptic asking “What’s the ROI on all this?”, you’ll be very glad you have an answer.

“Measure early. The dashboard will make decisions for you that would otherwise become arguments.”

Next: The inflection point, when the team stopped worrying about agents and started wanting more of them.